This installation uses Ubuntu 20.04 as the operating system.

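First, update the package list.

sudo apt-get update

Install Java 8 with the following command.

sudo apt-get install -y openjdk-8-jdk

Configure the environment variable by adding the following line to ~/.bashrc.

vi ~/.bashrc

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

Reload the file so the environment variable takes effect.

source ~/.bashrc

Download the installation package from the official Apache Hadoop website.

Alternatively, download it directly from the command line.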

wget https://dlcdn.apache.org/hadoop/common/hadoop-3.3.4/hadoop-3.3.4.tar.gz
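Before unpacking, it can be worth comparing the download against the SHA-512 digest that Apache publishes alongside each release. The sketch below assumes `sha512sum` is available and that the expected digest has been copied by hand from the download page; `verify_sha512` is a hypothetical helper name, not part of Hadoop.

```shell
# Hypothetical helper: compare a file's SHA-512 digest with an expected value.
# Usage: verify_sha512 <file> <expected-hex-digest>
verify_sha512() {
  file="$1"
  [ -f "$file" ] || { echo "no such file: $file" >&2; return 2; }
  # Normalise both digests to lower case, since published digests may be upper case.
  expected="$(printf '%s' "$2" | tr 'A-F' 'a-f')"
  # sha512sum prints "<digest>  <file>"; keep only the digest.
  actual="$(sha512sum "$file" | awk '{print tolower($1)}')"
  if [ "$actual" = "$expected" ]; then
    echo "OK: $file"
  else
    echo "MISMATCH: $file" >&2
    return 1
  fi
}
```

It would be called as `verify_sha512 hadoop-3.3.4.tar.gz "<digest from the download page>"`, where the digest is a placeholder to fill in.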

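Pseudo-distributed mode simulates a cluster by running multiple processes on a single node.

Running a pseudo-distributed Hadoop cluster requires an SSH key pair so that the node can log in to itself without a password.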

$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/wux_labs/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/wux_labs/.ssh/id_rsa

Your public key has been saved in /home/wux_labs/.ssh/id_rsa.pub

The key fingerprint is:

SHA256:rTJMxXd8BoyqSpLN0zS15j+rRKBWiZB9jOcmmWz4TFs wux_labs@wux-labs-vm

The key's randomart image is:

+---[RSA 3072]----+

| .o o o. |

| ..o.+o. .... |

| o.*+.oo. o o |

| . BoEo+o . o |

| OoB.=S . |

| o.Ooo... |

| o o+ o. |

| . + o |

| ...o |

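+----[SHA256]-----+

Authorize the new public key for login by copying it to authorized_keys.

cp ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys

Extract the installation package to the target path.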

mkdir -p apps

tar -xzf hadoop-3.3.4.tar.gz -C apps

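The bin directory holds the commonly used Hadoop commands, such as the hdfs command for operating HDFS, as well as hadoop and yarn.

The etc directory holds the Hadoop configuration files, including the settings for HDFS, MapReduce, YARN, and the list of cluster nodes.

The sbin directory holds the cluster management commands, such as those that start the cluster, start HDFS, start YARN, and stop the cluster.

The share directory holds Hadoop's supporting resources, such as documentation and the Jar files of each module.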
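As a quick sanity check that the archive was unpacked completely, the four directories described above can be tested for existence. This is only a sketch; `check_hadoop_layout` is a hypothetical helper name, and it checks nothing beyond the presence of the directories.

```shell
# Hypothetical helper: report which of the expected top-level directories
# are missing from an unpacked Hadoop distribution.
# Usage: check_hadoop_layout <hadoop-dir>
check_hadoop_layout() {
  dir="$1"
  missing=0
  for sub in bin etc sbin share; do
    if [ ! -d "$dir/$sub" ]; then
      echo "missing: $dir/$sub" >&2
      missing=1
    fi
  done
  # Returns 0 when every directory exists, 1 otherwise.
  return "$missing"
}
```

For the paths used in this walkthrough, the call would be `check_hadoop_layout apps/hadoop-3.3.4 && echo "layout looks complete"`.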

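Configure the environment variables, mainly HADOOP_HOME and PATH.

vi ~/.bashrc

export HADOOP_HOME=/home/wux_labs/apps/hadoop-3.3.4

export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

Reload the file so the environment variables take effect:

source ~/.bashrc

Besides the environment variables, pseudo-distributed mode also requires editing Hadoop's configuration files.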

$ vi $HADOOP_HOME/etc/hadoop/hadoop-env.sh

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

export HADOOP_HOME=/home/wux_labs/apps/hadoop-3.3.4

export HADOOP_CONF_DIR=/home/wux_labs/apps/hadoop-3.3.4/etc/hadoop

$ vi $HADOOP_HOME/etc/hadoop/core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://wux-labs-vm:8020</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/wux_labs/data/hadoop/temp</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.groups</name>

<value>*</value>

</property>

</configuration>

$ vi $HADOOP_HOME/etc/hadoop/hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/home/wux_labs/data/hadoop/hdfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/home/wux_labs/data/hadoop/hdfs/data</value>

</property>

</configuration>

$ vi $HADOOP_HOME/etc/hadoop/mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.application.classpath</name>

<value>$HADOOP_HOME/share/hadoop/mapreduce/*:$HADOOP_HOME/share/hadoop/mapreduce/lib/*</value>

</property>

</configuration>

$ vi $HADOOP_HOME/etc/hadoop/yarn-site.xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>wux-labs-vm</value>

</property>

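</configuration>

Before starting the cluster for the first time, format the NameNode with the following command:

hdfs namenode -format

Then start the cluster with the following command.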

start-all.sh
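Once `start-all.sh` returns, the JDK's `jps` tool lists the running Java processes, which gives a quick way to confirm that the daemons came up. The sketch below assumes the five daemons that a single-node HDFS plus YARN setup normally starts (NameNode, DataNode, SecondaryNameNode, ResourceManager, NodeManager); `missing_daemons` is a hypothetical helper that reads `jps` output on standard input.

```shell
# Hypothetical helper: print the expected daemons that are absent from
# jps output supplied on standard input. Returns 0 if none are missing.
missing_daemons() {
  # jps prints one "<pid> <name>" pair per line; keep the names only.
  running="$(awk '{print $2}')"
  status=0
  for d in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # -x matches whole lines, so "NameNode" does not match "SecondaryNameNode".
    if ! printf '%s\n' "$running" | grep -qx "$d"; then
      echo "$d"
      status=1
    fi
  done
  return "$status"
}
```

A typical call would be `jps | missing_daemons && echo "all daemons running"`. If a name is printed, the corresponding log under the Hadoop logs directory is the usual place to look next.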

Upload a file to HDFS.

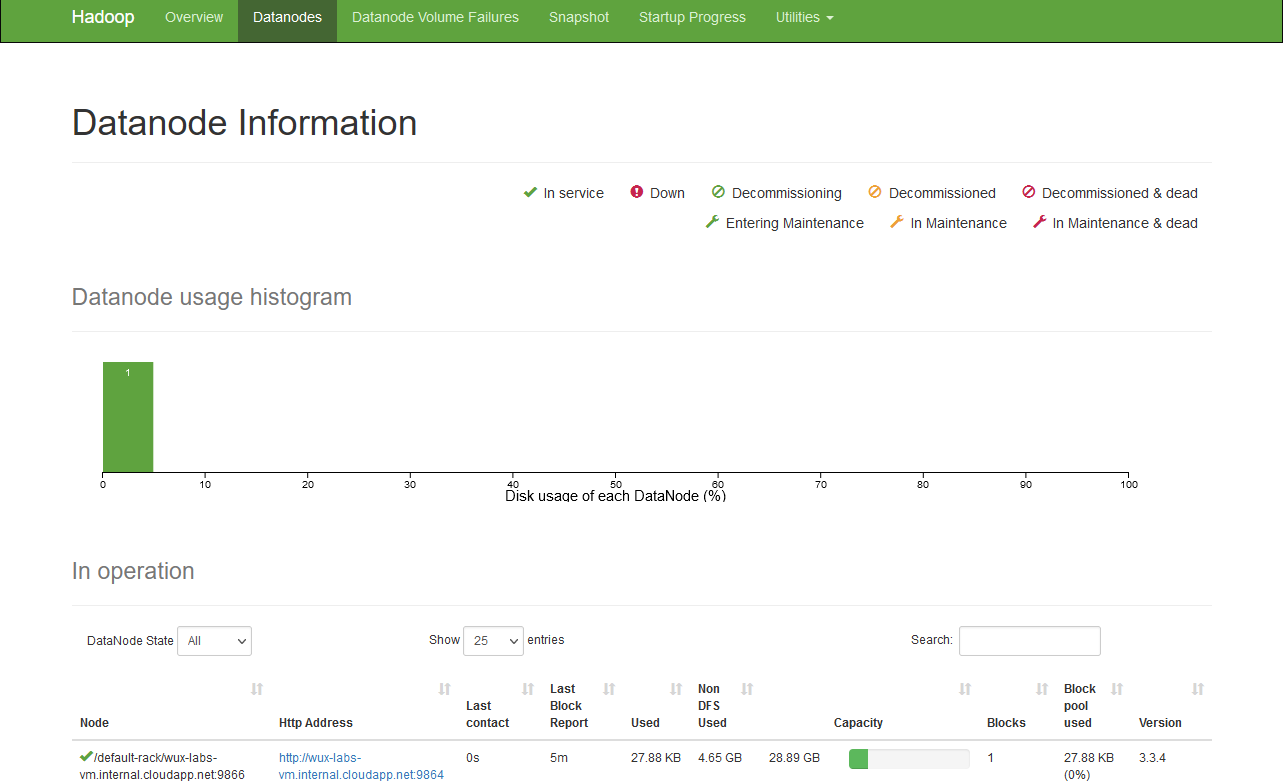

hdfs dfs -put .bashrc /

Open the HDFS Web UI to view cluster information; the default port is 9870.

Open the YARN Web UI to view cluster information; the default port is 8088.
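On a machine without a browser, the two web UIs can also be probed from the shell. The sketch below assumes `curl` is installed and that the UIs listen on the host name used in `core-site.xml`; `ui_url` and `ui_status` are hypothetical helper names.

```shell
# Hypothetical helper: build the URL of a web UI from a host and a port.
ui_url() {
  printf 'http://%s:%s/\n' "$1" "$2"
}

# Hypothetical helper: print the HTTP status code returned by a URL.
# -s silences progress output, -o discards the body, -w prints the code.
ui_status() {
  curl -s -o /dev/null -w '%{http_code}' --max-time 5 "$1"
}
```

For example, `ui_status "$(ui_url wux-labs-vm 9870)"` probes the HDFS UI and `ui_status "$(ui_url wux-labs-vm 8088)"` probes the YARN UI. A 2xx or 3xx status code suggests the UI is reachable.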

The hdfs command is used to operate HDFS. Its usage is:

Usage: hdfs [OPTIONS] SUBCOMMAND [SUBCOMMAND OPTIONS]

The main client commands it supports are:

Client Commands:

classpath prints the class path needed to get the hadoop jar and the required libraries

dfs run a filesystem command on the file system

envvars display computed Hadoop environment variables

fetchdt fetch a delegation token from the NameNode

getconf get config values from configuration

groups get the groups which users belong to

lsSnapshottableDir list all snapshottable dirs owned by the current user

snapshotDiff diff two snapshots of a directory or diff the current directory contents with a snapshot

version print the version

The yarn command is used to operate YARN. Its usage is:

Usage: yarn [OPTIONS] SUBCOMMAND [SUBCOMMAND OPTIONS]

or yarn [OPTIONS] CLASSNAME [CLASSNAME OPTIONS]

where CLASSNAME is a user-provided Java class

The main client commands it supports are:

Client Commands:

applicationattempt prints applicationattempt(s) report

app|application prints application(s) report/kill application/manage long running application

classpath prints the class path needed to get the hadoop jar and the required libraries

cluster prints cluster information

container prints container(s) report

envvars display computed Hadoop environment variables

fs2cs converts Fair Scheduler configuration to Capacity Scheduler (EXPERIMENTAL)

jar <jar> run a jar file

logs dump container logs

nodeattributes node attributes cli client

queue prints queue information

schedulerconf Updates scheduler configuration

timelinereader run the timeline reader server

top view cluster information

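version print the version

The yarn jar command runs a jar file.

Create an input directory.

hdfs dfs -mkdir /input

Copy the Hadoop configuration files into the input directory.

hdfs dfs -put apps/hadoop-3.3.4/etc/hadoop/*.xml /input/

The following command runs one of the example programs bundled with Hadoop. It counts the strings in the input directory that match the pattern dfs[a-z.]+ and writes the result to the output directory.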

yarn jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.4.jar grep /input /output 'dfs[a-z.]+'

The submitted job is visible in the YARN Web UI.
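To get a feel for what the `grep` example computes, roughly the same counts can be produced locally with standard tools. This is only a sketch for cross-checking, not how the MapReduce job is implemented; `count_matches` is a hypothetical helper name, and it assumes a `grep` that supports the `-o` option.

```shell
# Hypothetical helper: count every match of a regex across the XML files
# in a directory, printing "<count> <match>" with the most frequent first.
# Usage: count_matches <dir> <extended-regex>
count_matches() {
  # -o prints only the matched text, -h omits file names, -E enables ERE.
  grep -ohE -- "$2" "$1"/*.xml \
    | sort \
    | uniq -c \
    | awk '{print $1, $2}' \
    | sort -k1,1nr -k2,2
}
```

Run against the local copies of the uploaded files, the call would be `count_matches apps/hadoop-3.3.4/etc/hadoop 'dfs[a-z.]+'`.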

The job's output is:

$ hdfs dfs -cat /output/*

1 dfsadmin

1 dfs.replication

1 dfs.namenode.name.dir

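1 dfs.datanode.data.dir

Similarly, run the bundled example that estimates pi. The two arguments are the number of maps and the number of samples per map.

yarn jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.4.jar pi 10 10

The result is: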

$ yarn jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.4.jar pi 10 10

Number of Maps = 10

Samples per Map = 10

Wrote input for Map #0

Wrote input for Map #1

Wrote input for Map #2

Wrote input for Map #3

Wrote input for Map #4

Wrote input for Map #5

Wrote input for Map #6

Wrote input for Map #7

Wrote input for Map #8

Wrote input for Map #9

Starting Job

... ...

Job Finished in 43.768 seconds

Estimated value of Pi is 3.20000000000000000000

The submitted job is visible in the YARN Web UI.
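The estimate of 3.2 is coarse because `pi 10 10` uses only 10 maps with 10 samples each, 100 points in total. The sketch below illustrates the underlying idea with a plain pseudo-random Monte Carlo estimate in `awk`: it counts how many random points in the unit square fall inside the quarter circle and multiplies that fraction by 4. This is a simplified stand-in, since the Hadoop example uses a quasi-Monte Carlo method instead of `rand()`; `estimate_pi` and its seed argument are hypothetical.

```shell
# Hypothetical helper: estimate pi from n pseudo-random points.
# Usage: estimate_pi <number-of-points> <seed>
estimate_pi() {
  awk -v n="$1" -v seed="$2" 'BEGIN {
    srand(seed)                      # fixed seed makes the run repeatable
    inside = 0
    for (i = 0; i < n; i++) {
      x = rand(); y = rand()         # random point in the unit square
      if (x * x + y * y <= 1) inside++
    }
    printf "%.4f\n", 4 * inside / n  # quarter-circle area is pi/4
  }'
}
```

Comparing `estimate_pi 100 1` with `estimate_pi 1000000 1` shows how the estimate tightens as the number of points grows, which is also what larger arguments to the Hadoop `pi` example are meant to achieve.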